Books everyone is talking about.

Too powerful to be silenced. Too good not to be shared.

The most anticipated book and event of the year!

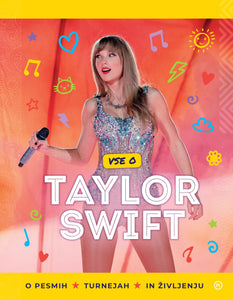

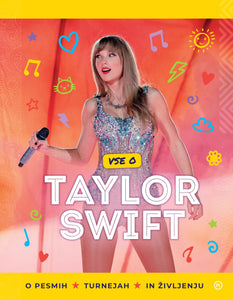

Step into the world of the queen of pop!

Everything about Taylor Swift's songs, tours and life.

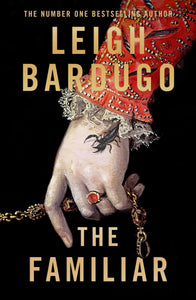

A new book by a celebrated literary master

The writer who got inside Putin's mind and wrote a bestseller

Discover the world well prepared

Find inspiration for trips and travels.

BIG spring sale!

Books, backpacks, toys, ...

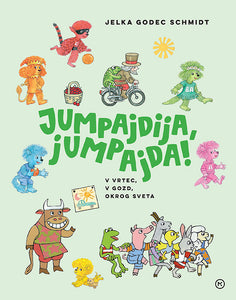

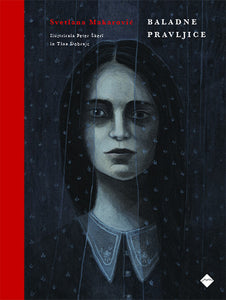

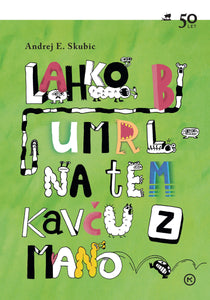

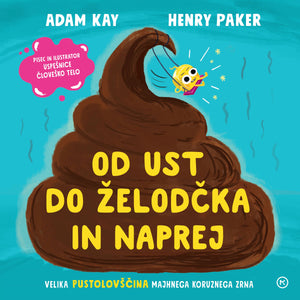

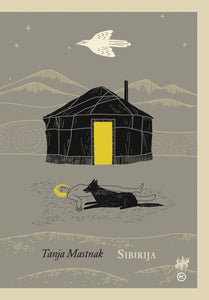

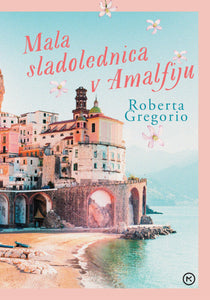

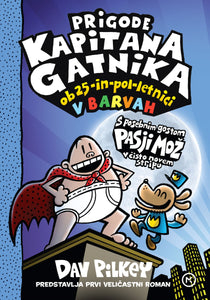

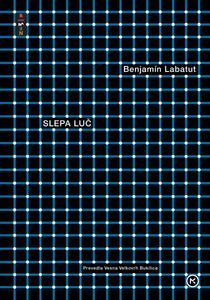

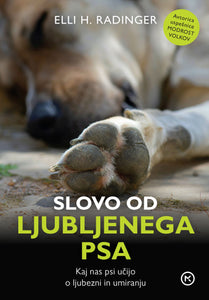

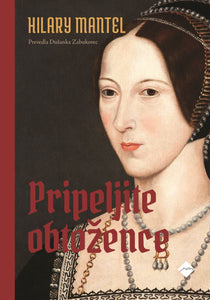

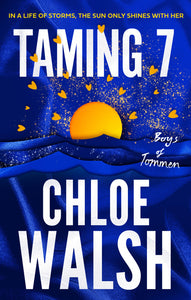

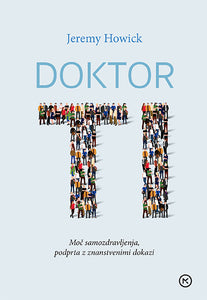

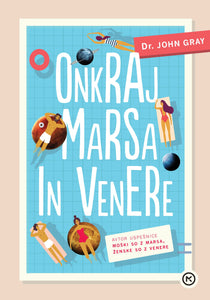

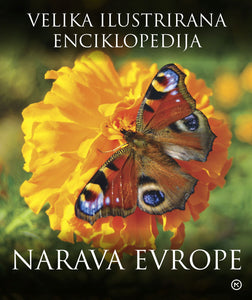

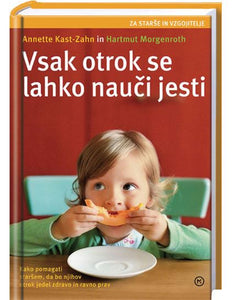

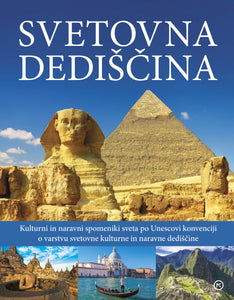

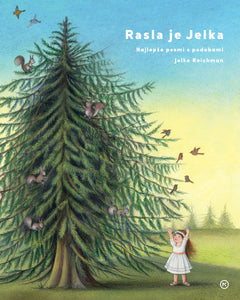

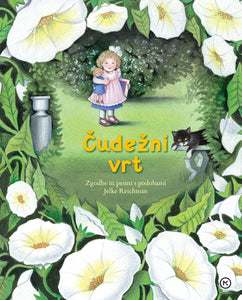

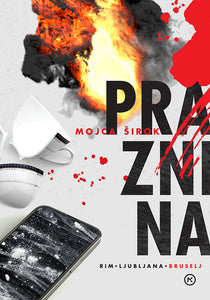

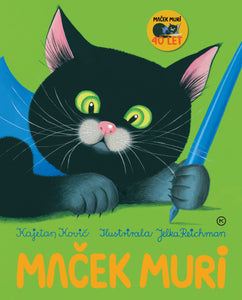

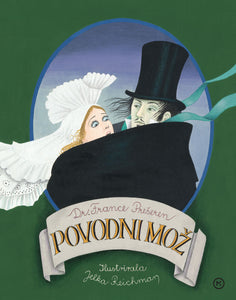

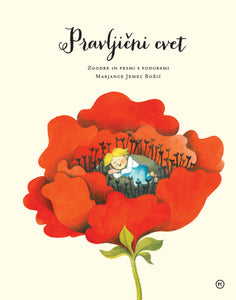

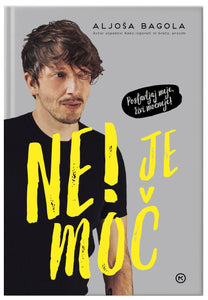

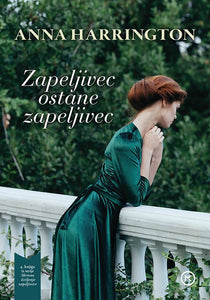

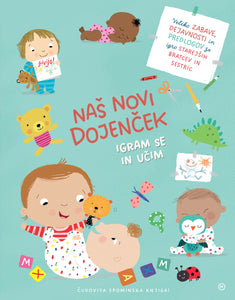

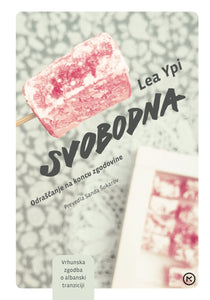

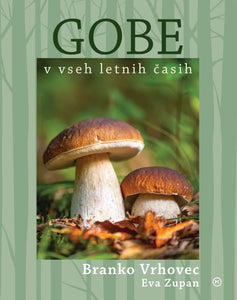

New books on offer

For everyone who loves fresh stories.

Binding: Hardcover

In stock at 34 stores now, or by online order

Binding: Hardcover

In stock at 36 stores now, or by online order

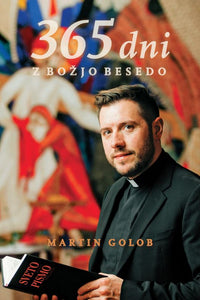

Binding: Hardcover

In stock at 34 stores now, or by online order

Binding: Hardcover

In stock at 26 stores now, or by online order

Binding: Hardcover

In stock at 33 stores now, or by online order

Binding: Hardcover

In stock at 35 stores now, or by online order

Binding: Hardcover

In stock at 32 stores now, or by online order

Books everyone is talking about

Too powerful to be silenced. Too good not to be shared.

Binding: Hardcover

In stock at 46 stores now, or by online order

Binding: Hardcover

In stock at 46 stores now, or by online order

Binding: Softcover

In stock at 1 store now, or by online order

Binding: Hardcover

In stock at 34 stores now, or by online order

Binding: Hardcover

In stock at 42 stores now, or by online order

Binding: Softcover

In stock at 41 stores now, or by online order

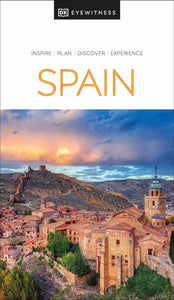

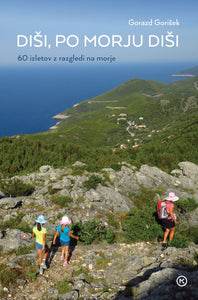

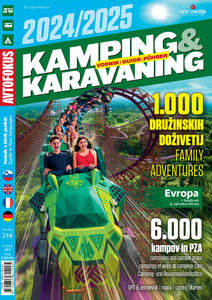

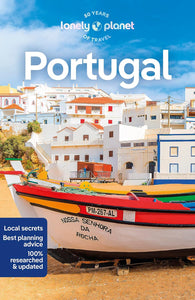

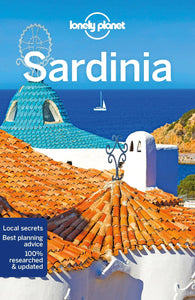

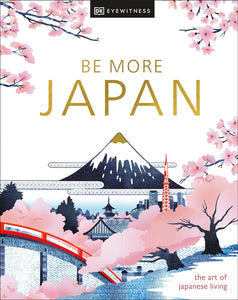

Current tourist and travel guides

Tourist and travel guides to popular destinations

Binding: Softcover

In stock at 12 stores now, or by online order

Binding: Softcover

In stock at 5 stores now, or by online order

Binding: Flexibound

In stock at 47 stores now, or by online order

Binding: Flexibound

In stock at 48 stores now, or by online order

Binding: Softcover

In stock at 10 stores now, or by online order

Binding: Softcover

In stock at 8 stores now, or by online order

Binding: Softcover

In stock at 10 stores now, or by online order

Binding:

In stock at 1 store now, or by online order

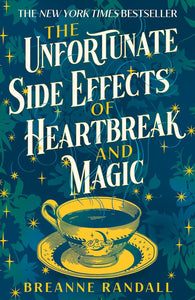

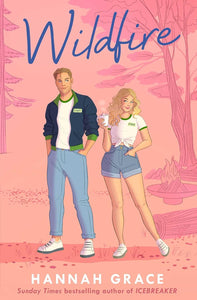

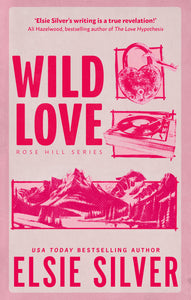

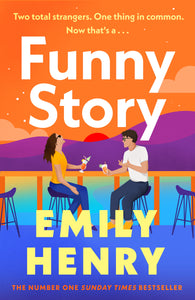

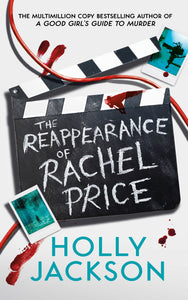

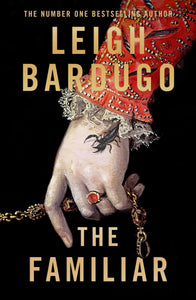

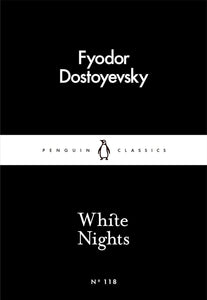

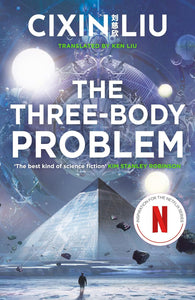

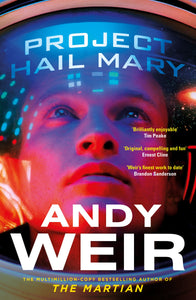

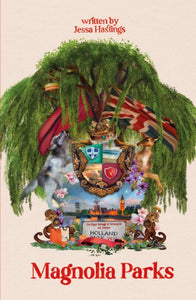

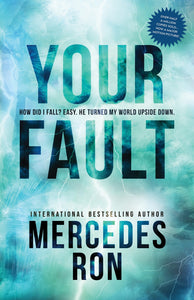

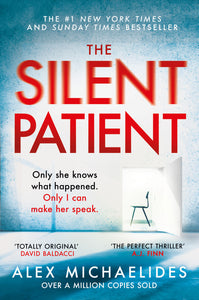

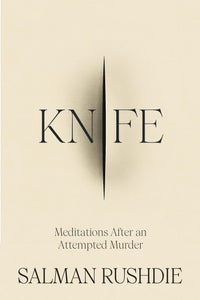

New books from the United Kingdom and the USA

New releases in English

Binding: Softcover

In stock at 3 stores now, or by online order

Binding: Softcover

In stock at 8 stores now, or by online order

Binding: Softcover

In stock at 1 store now, or by online order

Binding: Softcover

In stock at 1 store now, or by online order

Binding: Softcover

In stock at 12 stores now, or by online order

Binding: Softcover

In stock at 1 store now, or by online order

Discounted items

Books, backpacks, toys, ...

Binding: Flexibound

In stock at 38 stores now, or by online order

In stock at 40 stores now, or by online order

In stock at 39 stores now, or by online order

In stock at 41 stores now, or by online order

In stock at 41 stores now, or by online order

In stock at 45 stores now, or by online order

In stock at 34 stores now, or by online order

Books with a dedication and the author's signature

Browse our selection of signed copies by our most popular authors and give a book with a dedication to yourself or your loved ones.

Binding: Flexibound

In stock at 48 stores now, or by online order

Binding: Hardcover

In stock at 47 stores now, or by online order

Binding: Hardcover

In stock at 46 stores now, or by online order

Binding: Hardcover

In stock at 47 stores now, or by online order

Binding: Hardcover

In stock at 47 stores now, or by online order

Binding: Hardcover

In stock at 49 stores now, or by online order

Binding: Hardcover

In stock at 23 stores now, or by online order

Binding: Hardcover

In stock at 47 stores now, or by online order

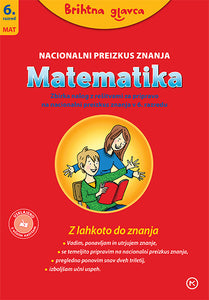

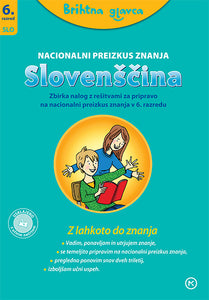

I review. I reinforce. I know it!

With excellent exercise collections aligned with the curricula. Also for preparing for the NPZ (the national knowledge assessment)!

Binding: Softcover

In stock at 43 stores now, or by online order

Binding: Paperback

In stock at 43 stores now, or by online order

Binding: Paperback

In stock at 44 stores now, or by online order

Binding: Paperback

In stock at 42 stores now, or by online order

Binding: Paperback

In stock at 43 stores now, or by online order

Binding: Paperback

In stock at 42 stores now, or by online order

Binding: Softcover

In stock at 44 stores now, or by online order

Binding: Softcover

In stock at 45 stores now, or by online order

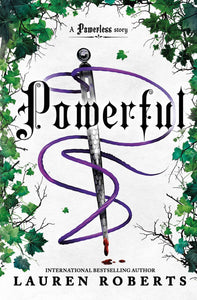

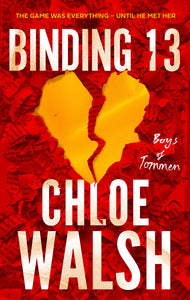

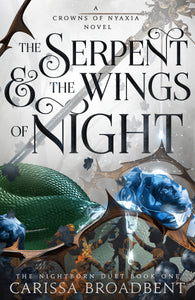

#BookTok

Bestselling books on TikTok

Binding: Softcover

In stock at 1 store now, or by online order

Binding: Softcover

In stock at 2 stores now, or by online order

Binding: Softcover

In stock at 2 stores now, or by online order

Binding: Softcover

In stock at 2 stores now, or by online order

Binding: Softcover

In stock at 1 store now, or by online order

Binding: Hardcover

In stock at 2 stores now, or by online order

Binding: Softcover

In stock at 9 stores now, or by online order

Binding: Softcover

In stock at 9 stores now, or by online order
